A Simple Framework to Structure Any A/B Testing Interview Question - Part 1/2

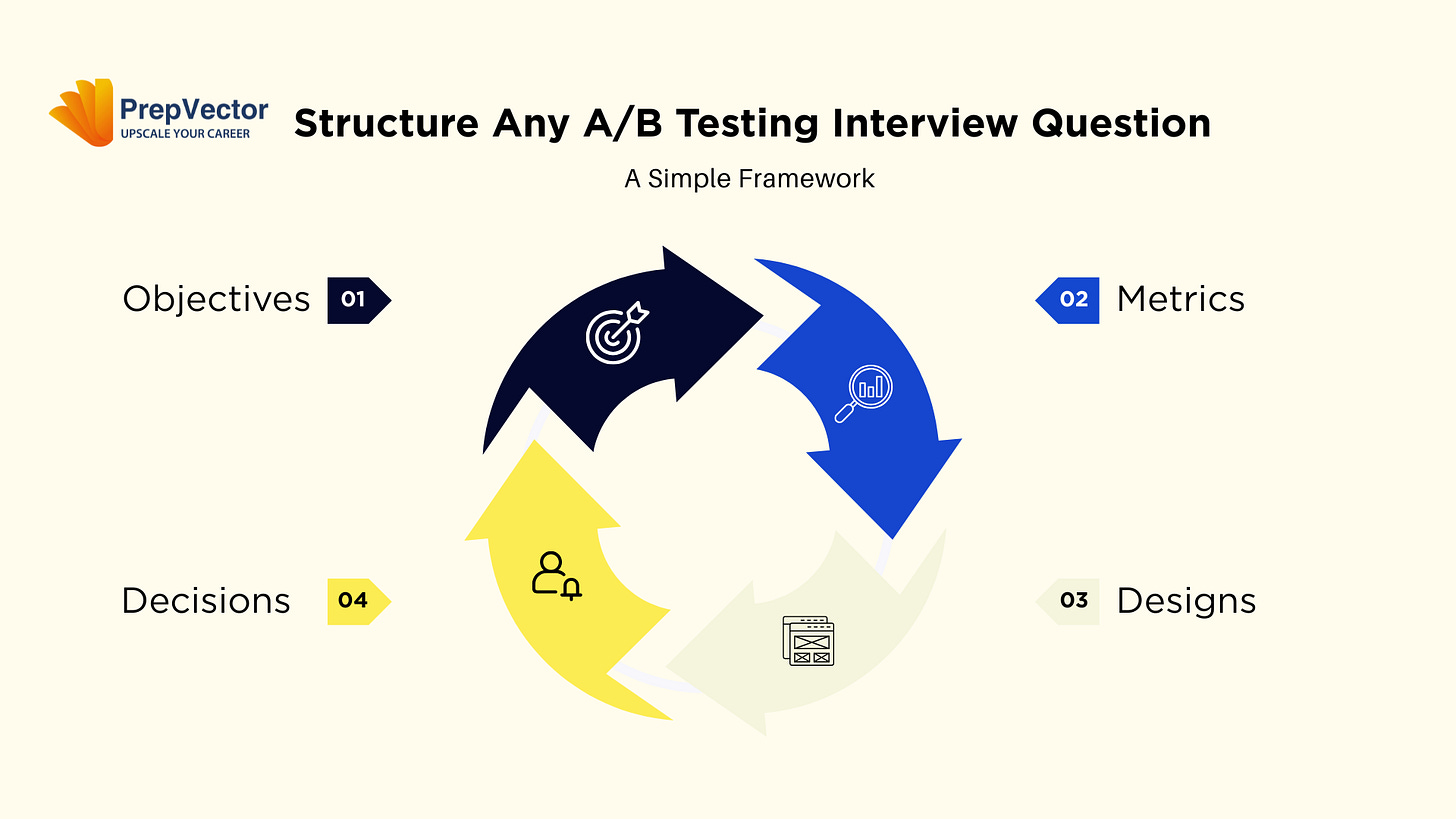

Master a simple four-step framework (Objective → Metrics → Design → Decision) to turn any experiment question into a clear, strategic answer interviewers actually care about.

I’ve watched hundreds of candidates answer A/B testing questions, and there’s a pattern to how they fail. It’s not that they don’t know statistics. Most can calculate sample sizes and explain p-values perfectly. They fail because they treat A/B testing like a math problem when it’s actually a business decision wrapped in statistics.

👋 Hey! This is Manisha Arora from PrepVector. Welcome to the Tech Growth Series, a newsletter that aims to bridge the gap between academic knowledge and practical aspects of data science. My goal is to simplify complicated data concepts, share my perspectives on the latest trends, and share my learnings from building and leading data teams.

Say the hiring manager asks: “Our checkout button is blue. Should we test making it green?”

Candidate A immediately jumps to mechanics: “Yes, we’d need to calculate the required sample size based on our baseline conversion rate, split traffic evenly, and run it until we reach statistical significance at 95% confidence...”

They’re not wrong. But they’ve already lost the interviewer.

Candidate B takes a different approach: “Before we discuss testing, I need to understand what problem we’re solving. Are we seeing abandonment at checkout? Do we have data suggesting color is affecting conversion? What’s the hypothesis behind this change?”

See the difference? The first candidate is executing. The second is thinking.

Most candidates fail A/B testing interviews not because they lack technical knowledge, but because they lack structure. They jump straight into implementation details without establishing why the test matters in the first place.

After conducting dozens of these interviews and coaching candidates through them, I’ve developed a simple four-step framework that works for any A/B testing question.

The framework is: Objective → Metrics → Design → Decision

Let me break down each component and illustrate how to apply it.

Step 1: Objective – Start With the “Why”

Before you discuss a single metric or experimental design, you need to establish what you’re trying to achieve. This seems obvious, but it’s where most candidates stumble.

When clarifying objectives, ask yourself:

What user problem are we solving?

What business metric are we trying to move?

Why do we believe this change might work?

What’s the opportunity cost of running this test?

Strong candidates don’t accept the question at face value. They probe the underlying motivation. Doing so shows that you’re not just an analyst who runs tests, but a partner who ensures tests align with business goals. In an interview setting, this demonstrates strategic thinking. In a real job, it prevents you from wasting weeks testing things that don’t matter.

Step 2: Metrics – Define Success Quantitatively

Once you’ve established the objective, you need to translate it into measurable metrics. This is where you move from strategy to specifics.

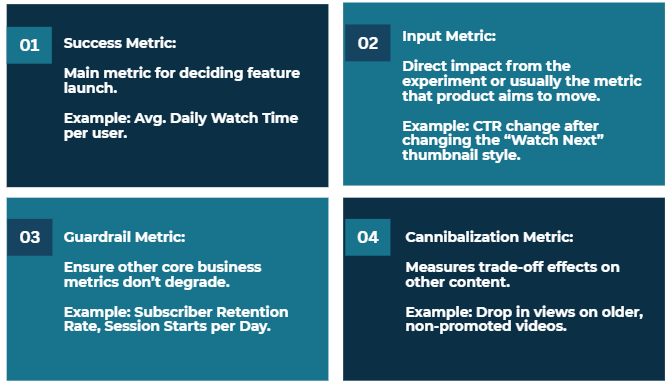

A robust metrics discussion covers three types of metrics:

Primary Metrics are your north star. If you could only measure one thing, what would it be? This metric should directly reflect your objective. If your objective is to increase user engagement, your primary metric might be daily active users or session duration. If it’s revenue growth, it might be average order value or conversion rate.

Secondary Metrics provide additional context. They help you understand the broader impact of your change. If your primary metric is conversion rate, secondary metrics might include average order value, items per cart, or time spent on product pages. These help you understand not just if something changed, but how and why.

Guardrail and Cannibalization Metrics protect you from unintended consequences. These are metrics you don’t expect to improve, but you want to ensure they don’t degrade. If you’re testing a faster checkout flow, you’d want to ensure your payment error rate doesn’t increase or customer satisfaction doesn’t drop.

Be specific about how you’ll measure. Don’t just say “user engagement.” Say “weekly active users, measured as users who complete at least one core action in a seven-day period.” This specificity shows you’ve dealt with the reality that vague metrics lead to endless debates.
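To make the weekly active users definition above concrete, here is a minimal sketch in Python. The event log layout, the event names, and the set of "core actions" are all assumptions for illustration; your product would define its own.

```python
from datetime import date, timedelta

# Assumed definition of a "core action"; hypothetical event names.
CORE_ACTIONS = {"add_to_cart", "purchase"}


def weekly_active_users(events, week_end):
    """Count distinct users with at least one core action in the
    seven-day window ending on week_end (inclusive)."""
    week_start = week_end - timedelta(days=6)
    active = {
        user_id
        for user_id, event_name, event_date in events
        if event_name in CORE_ACTIONS and week_start <= event_date <= week_end
    }
    return len(active)


# Hypothetical event log: (user_id, event_name, event_date)
events = [
    ("u1", "purchase", date(2024, 1, 3)),
    ("u1", "add_to_cart", date(2024, 1, 5)),     # same user, counted once
    ("u2", "page_view", date(2024, 1, 4)),       # not a core action
    ("u3", "add_to_cart", date(2023, 12, 30)),   # outside the window
    ("u4", "add_to_cart", date(2024, 1, 7)),
]

wau = weekly_active_users(events, week_end=date(2024, 1, 7))
print(wau)  # 2 (u1 and u4)
```

Writing the definition down this precisely forces the questions that otherwise surface mid-analysis: which actions count, and whether the window is inclusive.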

Step 3: Design – Structure the Experiment

Now you get to the mechanics. Your design discussion should cover:

Randomization Strategy: How will you assign users to treatment and control? The naive answer is “50/50 split,” but strong candidates think deeper. Should you randomize at the user level or the session level? If you’re testing a feature that is subject to network effects (like a social feed algorithm), should you consider cluster randomization?

Sample Size and Duration: How many users do you need, and how long should the test run? This isn’t just a statistical calculation; it’s a business decision. You need to balance statistical power with the cost of running the test and the opportunity cost of delaying a decision.

Treatment Variants: Is this a simple A/B test or should you consider multiple variants? Sometimes an A/B/C test with two different implementations helps you understand not just if a change works, but why.

Potential Confounds: What could corrupt your results? Seasonality? Different user segments responding differently? Network effects? Strong candidates proactively identify these issues.
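One common way to implement user-level randomization is to hash a stable identifier, so the same user always lands in the same variant. The sketch below is an illustration under assumptions, not a description of any particular experimentation platform; the function name and the experiment key are hypothetical.

```python
import hashlib


def assign_variant(unit_id, experiment, variants=("control", "treatment")):
    """Deterministically map a randomization unit to a variant.

    Hashing (experiment, unit_id) keeps a unit's assignment stable across
    visits, and salting with the experiment name keeps assignments in
    different experiments independent of each other.
    """
    key = f"{experiment}:{unit_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


# The same user gets the same answer every time.
first = assign_variant("user_42", "checkout_button_color")
second = assign_variant("user_42", "checkout_button_color")
print(first == second)  # True

# Across many users the split is close to even.
counts = {"control": 0, "treatment": 0}
for i in range(10_000):
    counts[assign_variant(f"user_{i}", "checkout_button_color")] += 1
print(counts)
```

The choice of `unit_id` is the randomization decision itself: pass a user ID for user-level assignment, a session ID for session-level assignment, or a cluster ID (for example a region or a friend group) for cluster randomization. Passing three names in `variants` gives an A/B/C split with the same code.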

This is where you prove technical competence, but because you’ve laid the groundwork with objectives and metrics, your design choices will make sense rather than seeming arbitrary.
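For the sample size and duration question, a rough calculation helps anchor the business conversation. The sketch below uses the standard normal-approximation formula for comparing two proportions; the baseline rate, target lift, and daily traffic are made-up inputs, and the function names are hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate users needed per arm for a two-sided test of two proportions.

    n = (z_{1-alpha/2} + z_{power})^2 * (p1(1-p1) + p2(1-p2)) / (p2 - p1)^2
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


def days_needed(n_per_arm, daily_eligible_users, n_arms=2):
    """Days of traffic required if every eligible user enters the test."""
    return math.ceil(n_per_arm * n_arms / daily_eligible_users)


# Assumed inputs: 10% baseline conversion, 5% relative lift worth detecting,
# 10,000 eligible users per day.
n = sample_size_per_arm(baseline_rate=0.10, relative_lift=0.05)
print(n, days_needed(n, daily_eligible_users=10_000))
```

The smaller the lift you want to detect, the more users you need, and the requirement grows roughly with the inverse square of the lift. That is the trade-off to discuss with the interviewer. In practice, teams often round the duration up to whole weeks so that every day of the week is represented equally.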

Step 4: Decision – Plan for Action

The final step separates good candidates from great ones. Most people forget that the point of an A/B test isn’t to generate a p-value. It is to make a decision.

Your decision framework should address:

Decision Criteria: What results would lead you to ship the treatment? Don’t just say “if it’s statistically significant.” Specify the minimum effect that would be worth the implementation cost, which should be the same minimum detectable effect you sized the test for. Maybe you need at least a 2% lift in conversion rate to justify the engineering resources to fully build out the feature.

Segmentation Analysis: Will you look at how different user segments responded? Perhaps the new feature works great for power users but confuses novices. Your launch decision might be to ship to power users only.

Follow-up Actions: What will you do based on different outcomes? If the test succeeds, what’s the rollout plan? If it fails, what did you learn and what will you try next? If results are mixed, what additional tests might clarify the picture?
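The decision criteria above can be written down before the test starts. Here is a minimal sketch of one possible rule: compare a confidence interval for the lift against the minimum lift worth shipping. The 2% threshold comes from the example above; the counts, the function name, and the three outcome labels are assumptions for illustration. Converting the absolute interval to a relative lift by dividing by the control rate is a simplification that ignores uncertainty in the control rate.

```python
import math
from statistics import NormalDist


def decide(conv_c, n_c, conv_t, n_t, min_worthwhile_lift=0.02, alpha=0.05):
    """Return 'ship', 'do_not_ship', or 'inconclusive'.

    Builds a normal-approximation confidence interval for the difference in
    conversion rates, expresses it as a relative lift over control, and
    compares it with the minimum lift that justifies the build cost.
    """
    p_c = conv_c / n_c
    p_t = conv_t / n_t
    diff = p_t - p_c
    se = math.sqrt(p_c * (1 - p_c) / n_c + p_t * (1 - p_t) / n_t)
    z = NormalDist().inv_cdf(1 - alpha / 2)
    rel_low = (diff - z * se) / p_c
    rel_high = (diff + z * se) / p_c

    if rel_low >= min_worthwhile_lift:
        return "ship"          # even the pessimistic estimate clears the bar
    if rel_high < min_worthwhile_lift:
        return "do_not_ship"   # even the optimistic estimate falls short
    return "inconclusive"      # interval straddles the bar: plan a follow-up


# Hypothetical results: (conversions, users) for control, then treatment.
print(decide(10_000, 100_000, 10_800, 100_000))  # ship
print(decide(10_000, 100_000, 10_300, 100_000))  # inconclusive
print(decide(10_000, 100_000, 9_900, 100_000))   # do_not_ship
```

Each of the three outcomes maps to one of the follow-up actions: a rollout plan, a write-up of what you learned, or an additional test to clarify the picture.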

This demonstrates product judgment, not just statistical knowledge. You’re showing that you can take experimental results and turn them into concrete business actions.
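For the segmentation question, a per-segment breakdown can be as simple as the sketch below. The segment names and counts are invented to mirror the power users versus novices example, and the data layout is an assumption.

```python
def segment_lifts(results):
    """Relative lift of treatment over control within each segment.

    results maps segment -> {"control": (conversions, users),
                             "treatment": (conversions, users)}
    """
    lifts = {}
    for segment, arms in results.items():
        conv_c, n_c = arms["control"]
        conv_t, n_t = arms["treatment"]
        rate_c = conv_c / n_c
        rate_t = conv_t / n_t
        lifts[segment] = (rate_t - rate_c) / rate_c
    return lifts


# Hypothetical results split by user segment.
results = {
    "power_users": {"control": (300, 2_000), "treatment": (345, 2_000)},
    "novices": {"control": (400, 8_000), "treatment": (360, 8_000)},
}

for segment, lift in segment_lifts(results).items():
    print(f"{segment}: {lift:+.1%}")
```

A caveat worth stating in the interview: the more segments you slice, the more likely one of them shows a large lift by chance. Naming the segments you care about before the test, and treating any others as exploratory, keeps this analysis honest.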

Why This Framework Works

This framework works because it mirrors how product teams actually make decisions.

By starting with objectives, you demonstrate strategic thinking. By defining metrics carefully, you show you understand measurement challenges. By designing thoughtfully, you prove technical competence. And by planning your decision criteria upfront, you show you’re focused on outcomes, not just process.

Interviewers are trying to assess whether you’d be effective in the role. This framework proves you would be, because it shows you understand that A/B testing is ultimately about reducing uncertainty in product decisions, not about running statistical tests for their own sake.

See the Framework in Action

In Part 2 of this series, I will walk through a complete interview question and demonstrate how to structure your responses better by using this framework.

Final Thoughts

A/B testing interviews are trying to assess whether you can think clearly about experiments in ambiguous situations. The candidates who excel aren’t necessarily those with the most advanced statistical knowledge. They’re the ones who can structure problems, ask clarifying questions, and connect experimental design to business outcomes.

The next time you face an A/B testing interview question, remember: Objective → Metrics → Design → Decision. This simple framework will help you deliver a structured, thoughtful response that demonstrates exactly what interviewers are looking for.


If you’d like to dive deeper into experimentation, here are a few of our learning programs you might enjoy:

A/B Testing Course for Data Scientists and Product Managers

Learn how top product data scientists frame hypotheses, pick the right metrics, and turn A/B test results into product decisions. This course combines product thinking, experimentation design, and storytelling—skills that set apart analysts who influence roadmaps.

Advanced A/B Testing for Data Scientists

Master the experimentation frameworks used by leading tech teams. Learn to design powerful tests, analyze results with statistical rigor, and translate insights into product growth. A hands-on program for data scientists ready to influence strategy through experimentation.

Master product sense and A/B testing, and learn to use statistical methods to drive product growth. I focus on building a problem-solving mindset and applying data-driven strategies, including A/B testing, ML, and causal inference.

Not sure which course aligns with your goals? Send me a message on LinkedIn with your background and aspirations, and I’ll help you find the best fit for your journey.