Explain Like I am 5 | Day 16 : What are secondary and guardrail metrics in A/B testing?

Explain Like I’m Five Series | Lesson 16 : Success/Primary Metrics in A/B Testing? One core experimentation concept, explained with clarity and practical intuition.

In the series “Explain like I’m 5” we are breaking down the concepts of A/B testing that can be easily understood by even a 5-year-old.

👋 Hey! This is Manisha Arora from PrepVector. Welcome to the Tech Growth Series, a newsletter that aims to bridge the gap between academic knowledge and practical aspects of data science. My goal is to simplify complicated data concepts, share my perspectives on the latest trends, and share my learnings from building and leading data teams.

Last Post: Primary Metric in A/B Testing

You have an old bicycle, and you want to test a new one to see if it’s better.

We already discussed primary metrics in last post, so it will be

“Do you ride the new bike more?”

You measure: How many minutes do you ride each day?

Result: Old bike = 20 minutes per day

Result: New bike = 40 minutes per day

So yes, the new bike is better.

Secondary Metrics

These will be other cool stuff you’ll notice:

Are you having more fun? Yes

Do you go farther? Yes

Does it make your legs hurt? Yes

What this means: The new bike is a win! Sure, your legs get tired faster, but you are having way more fun and riding longer.

Guardrail Metrics

The things that would make us say “NO” and stop using it:

Did you get hurt or crash more? No

Does the bike break easily? No

Is the new bike dangerous in any way? No

What this means: All the safety rules pass. The bike is safe.

In A/B testing terms

In last post we discussed the importance of primary metrics in A/B testing setup

The problem with focusing exclusively on a single metric is that the real world is complex. Your experiment might improve revenue but tank user satisfaction. This is where secondary and guardrail metrics come in.

Every well-designed experiment operates with all three layers working together:

Primary metric — the single number your experiment is designed to move. It determines whether you won or lost. Defined before the test. Non-negotiable after it.

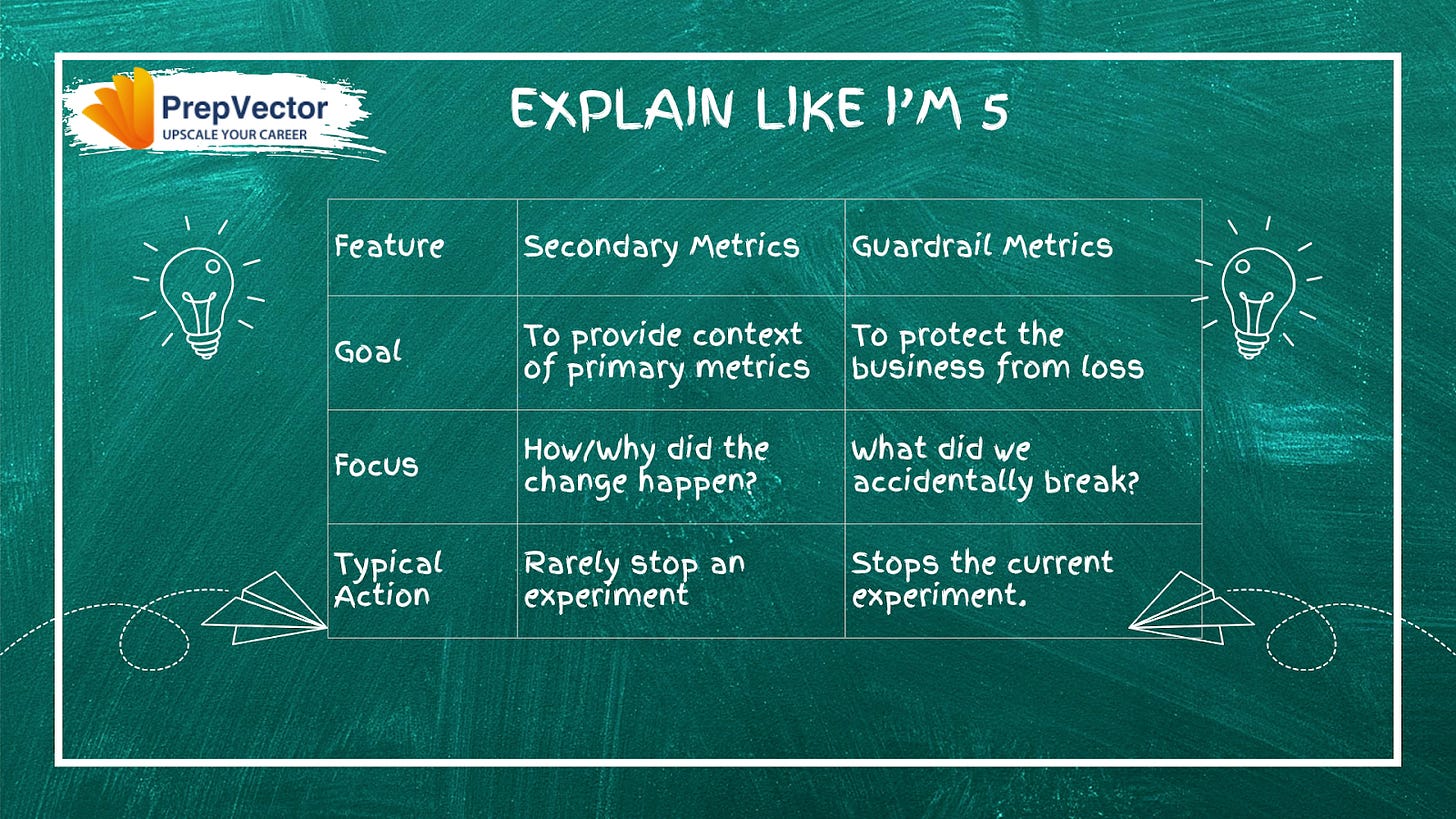

Secondary metrics — the supporting measurements that give context to your primary metric. They’re still tied to business value, and they help you understand the mechanism behind the primary result. But they won’t stop an experiment on their own, and they never override a primary metric verdict.

Guardrail metrics — the safety net. They prevent what practitioners call “bad wins” — tests that look successful on the surface while quietly degrading something important underneath. Guardrail metrics have strict thresholds, and if they worsen beyond those thresholds during the experiment, you stop the test.

A Closer Look at Secondary Metrics

Secondary metrics are important business outcomes you care about, but they’re not the primary reason you ran the test. They tell you the full story of what your experiment did beyond your headline metric.

But unlike your primary metric, a negative change in a secondary metric alone won’t kill your experiment.

Say you’re running an A/B test on a new homepage layout. Your primary metric is revenue per session. A natural secondary metric is average order value — it’s closely tied to business value, it moves in response to the same user behaviors, and understanding whether it shifts helps you interpret what’s actually driving the revenue change.

If revenue per session goes up and average order value goes up too, you know users are spending more per purchase — not just buying more frequently. That’s a different insight than if revenue per session goes up while average order value stays flat, which would suggest the layout is driving more purchases at the same spend level. Same primary metric win, very different underlying story.

Other common secondary metrics depending on your test type include time on page, scroll depth, feature adoption rate, repeat visit rate, and add-to-cart rate. The right secondary metrics are the ones that directly relate to the mechanism of your change — the behavioral steps between your intervention and your primary outcome.

A Closer Look at Guardrail Metrics

Guardrail metrics are your safety mechanisms. They protect you from wins that come at an unacceptable cost. They represent hard boundaries. If a guardrail metric gets worse, you stop the experiment, regardless of what your primary metric shows.

Consider a test where you’re optimizing email CTR with a more aggressive subject line strategy. CTR goes up 18%. That’s your primary metric, and it’s a clear win.

But what’s happening to your unsubscribe rate? If that climbs from 1.2% to 2.8% over the course of the test, you have a serious problem brewing — one that the CTR number would never reveal on its own. A guardrail threshold of, say, unsubscribe rate must stay below 2% would have flagged this automatically and prompted you to pause before the damage compounded.

This is what separates guardrail metrics from secondary metrics in practice. Secondary metrics are things you hope will move positively. Guardrail metrics are things you are committed will not move negatively beyond a defined limit. The distinction is directional and categorical — not just semantic.

Common guardrail metrics by test type include the following. For conversion rate tests: revenue per user, return rate, and customer support contact rate. For engagement tests: session abandonment, unsubscribe rate, and churn. For performance tests: page load time, error rate, and crash rate. For pricing tests: customer lifetime value, refund rate, and payment failure rate.

A Framework for Choosing Your Metrics

Before running your next experiment, ask yourself:

What’s the one thing this test needs to prove? (Primary metric)

What other business outcomes matter, but won’t kill the experiment? (Secondary metrics)

What could go catastrophically wrong, and how would we measure it? (Guardrail metrics)

Stay tuned for the next post to learn more about this framework.

Next Post: Framework for Primary, Secondary and Guardrail Metrics

If you’d like to dive deeper into experimentation, here are a few of our learning programs you might enjoy:

A/B Testing Course for Data Scientists and Product Managers

Learn how top product data scientists frame hypotheses, pick the right metrics, and turn A/B test results into product decisions. This course combines product thinking, experimentation design, and storytelling—skills that set apart analysts who influence roadmaps.

Advanced A/B Testing for Data Scientists

Master the experimentation frameworks used by leading tech teams. Learn to design powerful tests, analyze results with statistical rigor, and translate insights into product growth. A hands-on program for data scientists ready to influence strategy through experimentation.

Master Product Sense and AB Testing, and learn to use statistical methods to drive product growth. I focus on inculcating a problem-solving mindset, and application of data-driven strategies, including A/B Testing, ML, and Causal Inference, to drive product growth.

Not sure which course aligns with your goals? Send me a message on LinkedIn with your background and aspirations, and I’ll help you find the best fit for your journey.