Explain Like I am 5 | 7-Day Summary

Explain Like I’m Five Series | Understanding Null and Alternate Hypotheses and MDE. Understanding P-Values. What Is the Level of Significance?

In the “Explain Like I’m 5” series, we break down A/B testing topics so simply that even a 5-year-old could understand them.

👋 Hey! This is Manisha Arora from PrepVector. Welcome to the Tech Growth Series, a newsletter that aims to bridge the gap between academic knowledge and practical aspects of data science. My goal is to simplify complicated data concepts, share my perspectives on the latest trends, and share my learnings from building and leading data teams.

In the last few posts, we covered:

What are Null and Alternate Hypotheses?

What is MDE (Minimum Detectable Effect)?

What is a p-value?

Here is a quick revision of all three topics.

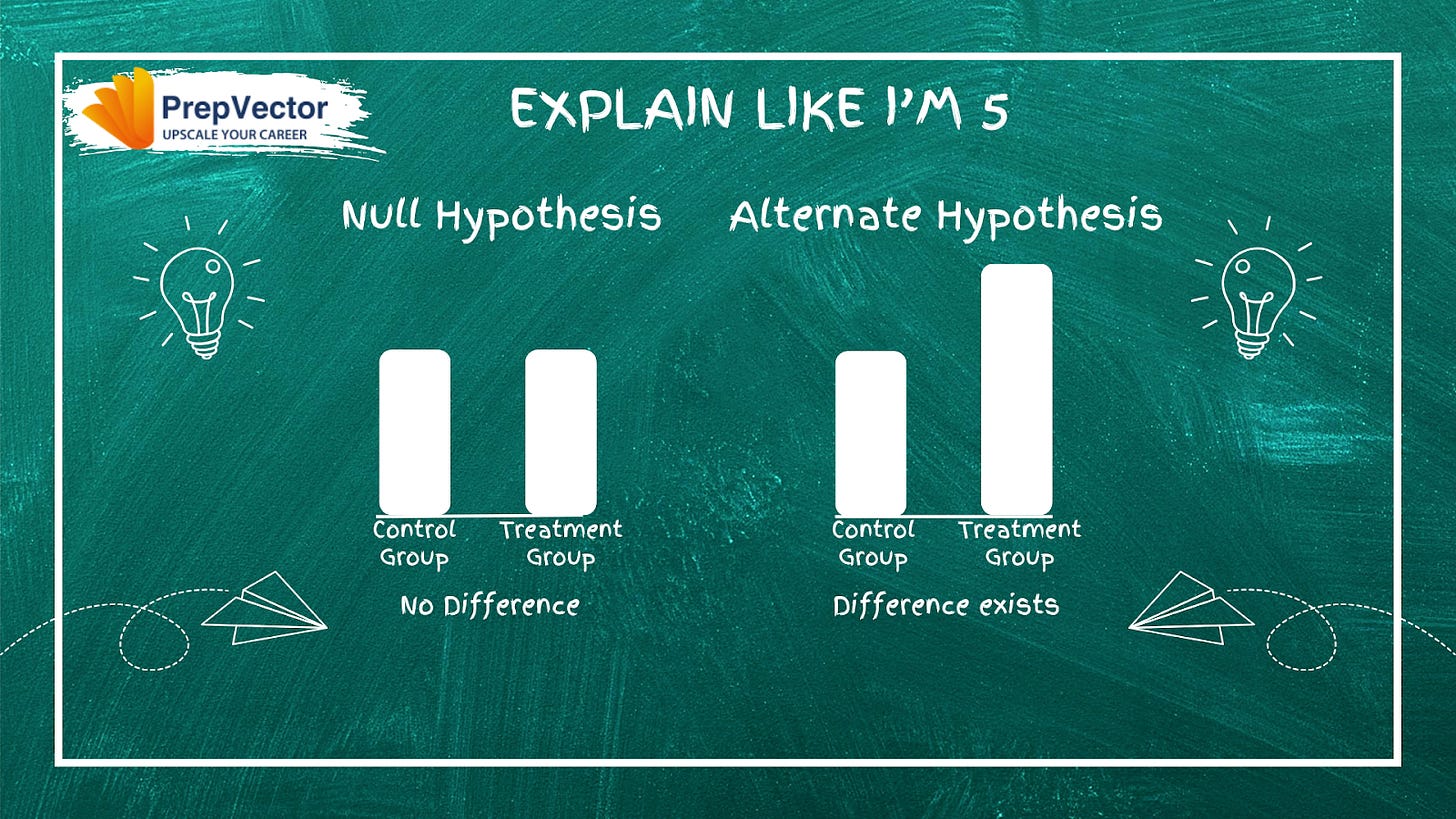

What are Null and Alternate Hypotheses?


Null Hypothesis (H₀): The hypothesis that there is no difference between the control group and the treatment group. It assumes that any observed difference is due to random chance, not to the intervention itself.

Example: The new checkout button has no effect on conversion rate.

Alternate Hypothesis (H₁): The hypothesis that there is a meaningful difference between the groups. This is what you are trying to find evidence for with your test.

Example: The new checkout button increases conversion rate.

In A/B testing, you collect data to gather evidence against the null hypothesis. If your results are statistically significant (typically p-value < 0.05), you reject H₀ and conclude that the alternate hypothesis is supported.
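For the checkout-button example, one common way to write this down is sketched below, assuming a pooled two-proportion z-test (one standard choice among several). Here p_C and p_T are the true conversion rates, x_C and x_T the observed conversions, and n_C and n_T the number of users in each group:

$$
H_0: p_T = p_C \qquad H_1: p_T > p_C
$$

$$
z = \frac{\hat p_T - \hat p_C}{\sqrt{\hat p\,(1-\hat p)\left(\frac{1}{n_T} + \frac{1}{n_C}\right)}},
\qquad \hat p = \frac{x_T + x_C}{n_T + n_C},
\qquad \hat p_T = \frac{x_T}{n_T},\quad \hat p_C = \frac{x_C}{n_C}
$$

The one-sided p-value is 1 − Φ(z), where Φ is the standard normal CDF. A two-sided test (H₁: p_T ≠ p_C) uses 2(1 − Φ(|z|)) instead.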

What is MDE (Minimum Detectable Effect)?

MDE is the smallest effect size (difference between control and treatment) that your test is powered to detect with statistical significance. In other words, it’s the minimum improvement you care about finding.
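For a conversion-style metric, a common textbook approximation links the MDE to the per-group sample size n, the baseline rate p, the significance level α, and the power 1 − β. This is a sketch that assumes equal group sizes, a two-sided test, and roughly the same variance in both groups:

$$
\text{MDE} \approx \left(z_{1-\alpha/2} + z_{1-\beta}\right)\sqrt{\frac{2\,p\,(1-p)}{n}}
\quad\Longleftrightarrow\quad
n \approx \frac{2\left(z_{1-\alpha/2} + z_{1-\beta}\right)^{2} p\,(1-p)}{\text{MDE}^{2}}
$$

Here the MDE is an absolute difference in rates. With α = 0.05 and 80% power, the z-values are about 1.96 and 0.84. Because n scales with 1/MDE², halving the MDE roughly quadruples the sample size you need.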

Example:

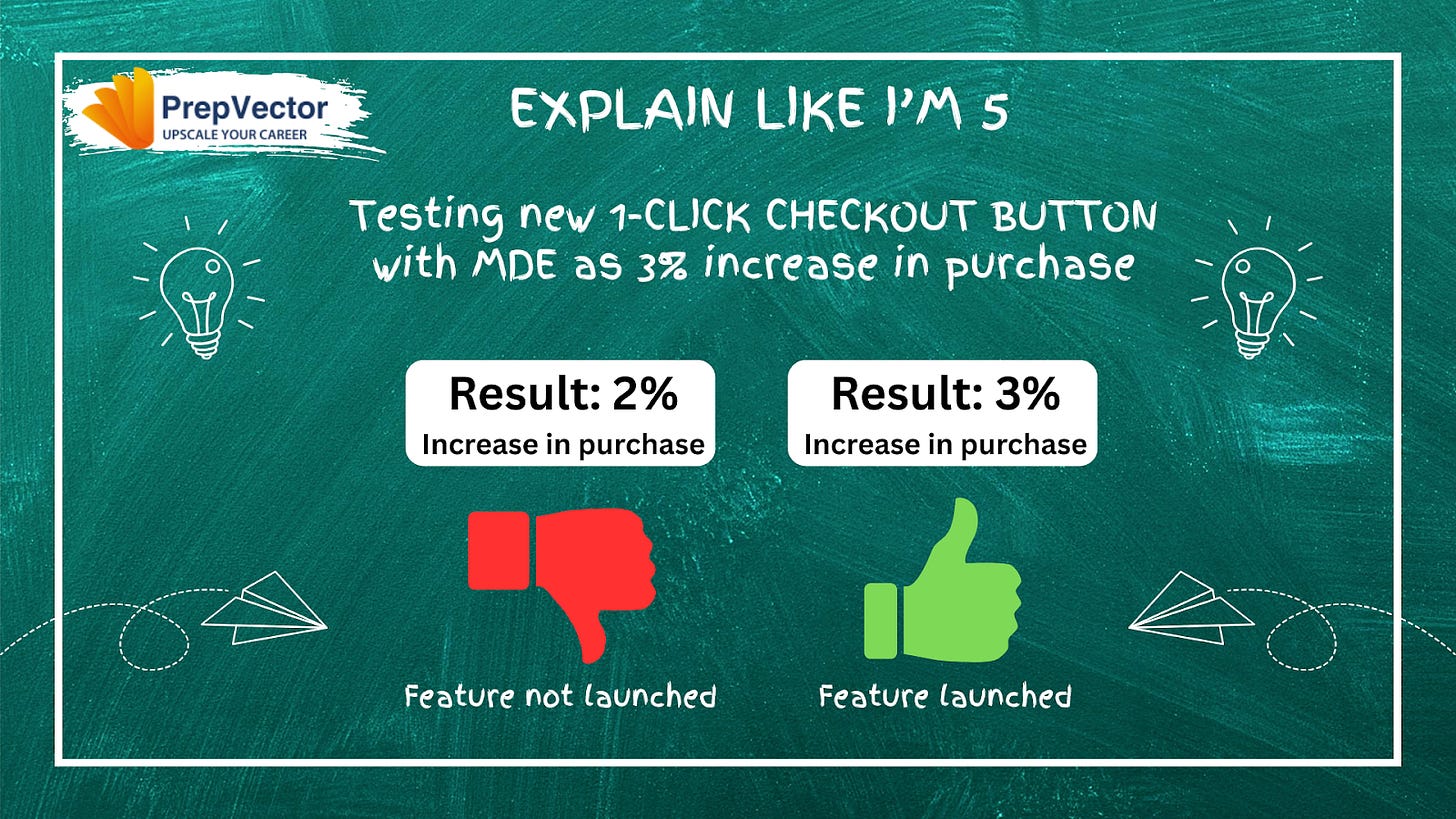

An e-commerce company wants to test whether a new “1-Click Checkout” button increases purchases.

H₀ (Null Hypothesis): The new checkout button does not change the purchase rate.

H₁ (Alternative Hypothesis): The new checkout button changes the purchase rate.

MDE (Minimum Detectable Effect): The team decides the feature is worth launching only if it increases purchases by at least 3%.

Using this MDE, you calculate the required sample size and run the experiment. Then you compare purchase rates between control and treatment.

If the difference between treatment and control is statistically significant, we reject H₀, i.e., we conclude that the new checkout button changes the purchase rate. If that difference is also positive and at least 3%, the lift is big enough to justify launching.
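The sample-size step in this example can be sketched in Python with the approximation n ≈ 2(z_{1−α/2} + z_{1−β})² p(1 − p) / δ², where δ is the absolute lift to detect, for a two-sided test. The example does not say whether “3%” is relative or absolute; this sketch assumes a relative lift. The 10% baseline purchase rate and the function name `sample_size_per_arm` are hypothetical, chosen only for illustration.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_arm(baseline_rate: float, relative_mde: float,
                        alpha: float = 0.05, power: float = 0.80) -> int:
    """Hypothetical helper: approximate users needed per group (two-sided test).

    Uses n = 2 * (z_{1-alpha/2} + z_{power})^2 * p(1-p) / delta^2,
    where delta is the absolute lift implied by the relative MDE.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # ~1.96 for alpha = 0.05
    z_power = NormalDist().inv_cdf(power)          # ~0.84 for 80% power
    delta = baseline_rate * relative_mde           # relative MDE -> absolute lift
    variance = baseline_rate * (1 - baseline_rate)
    return ceil(2 * (z_alpha + z_power) ** 2 * variance / delta ** 2)


# Hypothetical inputs: 10% baseline purchase rate, 3% relative MDE.
n = sample_size_per_arm(baseline_rate=0.10, relative_mde=0.03)
print(n)
```

With these made-up inputs the function asks for roughly 157,000 users per group. If the 3% were an absolute lift instead (10% to 13%), the required sample would be far smaller, which is why it helps to state the MDE's units up front.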

Complete Flow

Set your hypotheses → choose an MDE that matters for your business → calculate the required sample size → run the test → check whether the results reject H₀ and exceed your MDE.

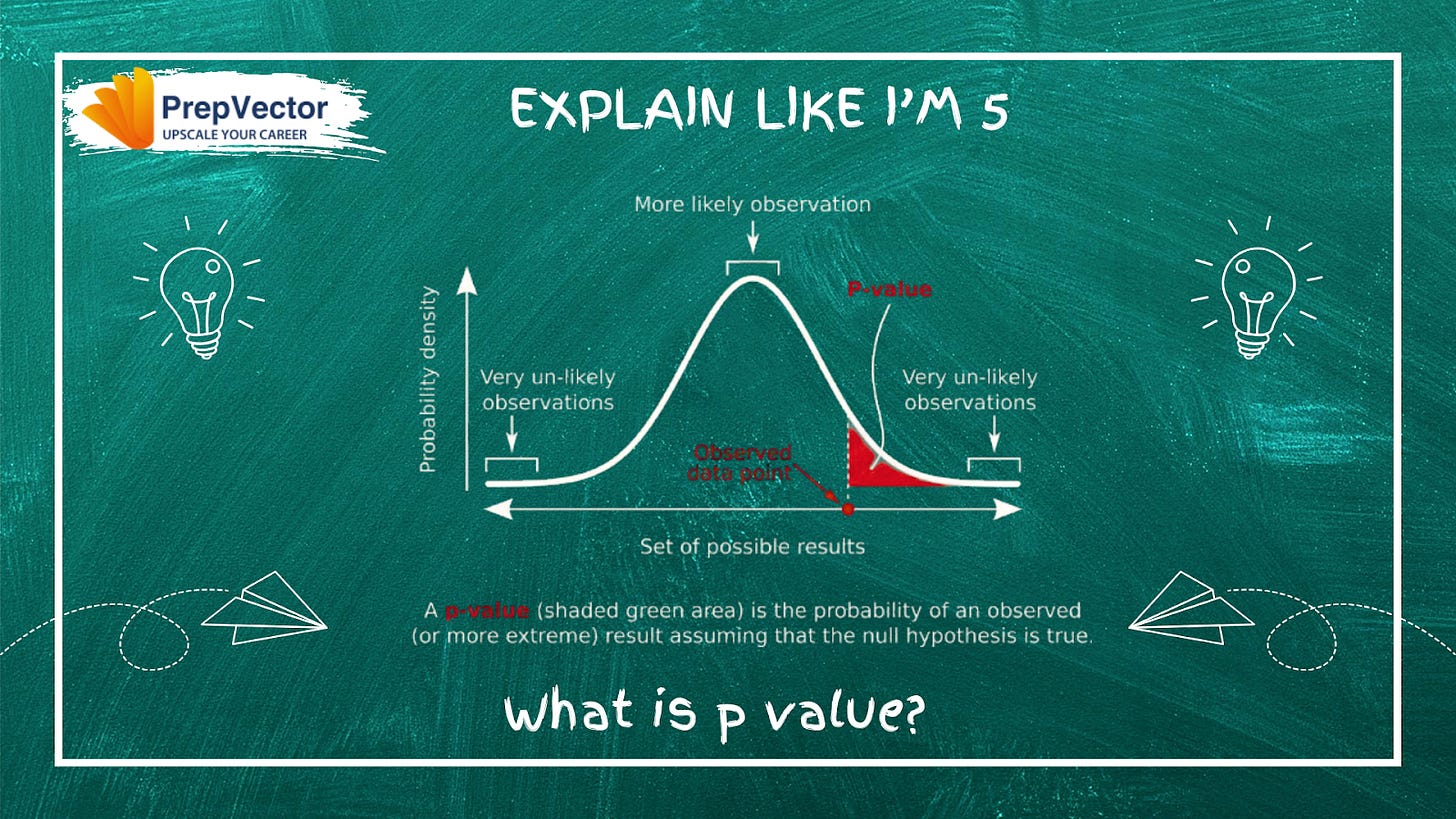
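The last step of this flow can be sketched as a small decision helper. The function name `launch_decision`, the labels it returns, and the choice to compare the observed lift directly against the MDE are assumptions for illustration; the lift and the MDE must be in the same units (both relative or both absolute).

```python
def launch_decision(p_value: float, observed_lift: float, mde: float,
                    alpha: float = 0.05) -> str:
    """Hypothetical helper: combine statistical significance with the MDE."""
    significant = p_value < alpha      # True -> reject H0 at level alpha
    meets_mde = observed_lift >= mde   # lift at least as large as the MDE
    if significant and meets_mde:
        return "launch"                 # real and large enough to matter
    if significant:
        return "significant_but_small"  # real, but below the business bar
    return "inconclusive"               # fail to reject H0


# Hypothetical results for the 1-Click Checkout test (relative lifts).
print(launch_decision(p_value=0.01, observed_lift=0.04, mde=0.03))
print(launch_decision(p_value=0.01, observed_lift=0.01, mde=0.03))
print(launch_decision(p_value=0.20, observed_lift=0.05, mde=0.03))
```

Note the third case: even a large observed lift counts as inconclusive when p = 0.20, because the data cannot rule out random chance.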

What is a p-value?

A p-value is the probability of observing a result at least as extreme as the one you actually observed, assuming the null hypothesis is true.

In simpler terms: How surprising is your result if there’s actually no real difference between the control and treatment groups?
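One way to make “how surprising” concrete is a permutation test: if H₀ is true, the control and treatment labels are interchangeable, so shuffling them many times shows how often chance alone produces a difference at least as large as the observed one. This is a simulation-based sketch, not necessarily how the p-values in this post were computed; the function name `permutation_p_value` is hypothetical, and the 0/1 outcome data simply reuse the email numbers from the example below.

```python
import random


def permutation_p_value(control: list[int], treatment: list[int],
                        n_permutations: int = 2_000, seed: int = 0) -> float:
    """Hypothetical helper: two-sided p-value estimated by shuffling labels."""
    rng = random.Random(seed)  # fixed seed so the estimate is reproducible
    n_c = len(control)
    observed = abs(sum(treatment) / len(treatment) - sum(control) / n_c)

    pooled = control + treatment  # under H0, any outcome could be in either group
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        fake_control, fake_treatment = pooled[:n_c], pooled[n_c:]
        diff = abs(sum(fake_treatment) / len(fake_treatment)
                   - sum(fake_control) / n_c)
        # Small tolerance so float rounding does not hide ties with the observed gap.
        if diff >= observed - 1e-12:
            extreme += 1
    return extreme / n_permutations


# 1 = opened, 0 = not opened: 100/1,000 vs 105/1,000 opens.
control = [1] * 100 + [0] * 900
treatment = [1] * 105 + [0] * 895
print(permutation_p_value(control, treatment))
```

For these numbers the estimate lands far above 0.05: a 0.5-point gap shows up very often when the labels are shuffled at random, so it is not surprising under H₀.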

How to Interpret P-Values

P-value < 0.05 (the typical threshold):

If there were truly no difference, random chance alone would produce a difference this large (or larger) less than 5% of the time. You reject the null hypothesis (H₀).

Conclusion: There is likely a real difference between the groups. Your result is statistically significant.

P-value ≥ 0.05:

If there were truly no difference, random chance alone would produce a difference this large (or larger) at least 5% of the time. You fail to reject the null hypothesis (H₀).

Conclusion: You don’t have enough evidence to claim a real difference. Your result is not statistically significant.

Example

Imagine you’re A/B testing an email subject line:

Control group: 10% open rate (1,000 emails)

Treatment group: 10.5% open rate (1,000 emails)

Suppose you get a p-value of 0.15.

Conclusion: There’s a 15% chance you’d see a 0.5 percentage-point difference (or larger) even if the subject lines performed identically. That is above the 0.05 threshold, so you can’t confidently say the new subject line is better. You fail to reject H₀.

An important point: a tiny p-value doesn’t always mean business impact. With a large enough sample, even a +0.5% CTR improvement can have a p-value of 0.001, yet the actual revenue gain could be negligible. Always check both the p-value and the real business effect.
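Both points can be checked with a pooled two-proportion z-test, sketched here with only the Python standard library. The test choice and the function name `two_proportion_p_value` are assumptions; the example does not say how its p-value was computed. On the email numbers above (100 vs 105 opens out of 1,000 each), this test gives a two-sided p-value of about 0.71 rather than 0.15, so the 0.15 is best read as illustrative; the conclusion, fail to reject H₀, is unchanged. Keeping the same 0.5-point lift but scaling to a hypothetical 1,000,000 emails per group drives the p-value far below 0.001, even though the effect itself is identical.

```python
from math import erfc, sqrt


def two_proportion_p_value(x_c: int, n_c: int, x_t: int, n_t: int) -> float:
    """Hypothetical helper: two-sided p-value from a pooled two-proportion z-test."""
    p_c, p_t = x_c / n_c, x_t / n_t
    p_pool = (x_c + x_t) / (n_c + n_t)  # common rate assumed under H0
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_c + 1 / n_t))
    z = (p_t - p_c) / se
    # 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)); erfc stays accurate for large |z|
    return erfc(abs(z) / sqrt(2))


# Email example: 100/1,000 vs 105/1,000 opens (10% vs 10.5%).
small = two_proportion_p_value(100, 1_000, 105, 1_000)
# Same 0.5-point lift at a hypothetical 1,000,000 emails per group.
large = two_proportion_p_value(100_000, 1_000_000, 105_000, 1_000_000)
print(f"n=1,000 per group:     p = {small:.2f}")
print(f"n=1,000,000 per group: p = {large:.1e}")
```

The effect size is the same in both calls; only the sample size changes. That is exactly why a small p-value on its own says little about whether the lift is worth shipping.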

A few useful resources on null and alternate hypotheses:

Null & Alternative Hypotheses | Definitions, Templates & Examples

Hypothesis Testing explained in 4 parts

A few useful resources on MDE:

What is minimal detectable effect? How it impacts experiment results

Minimum Detectable Effect (MDE) • SplitMetrics

A few useful resources on p-values:

Understanding P-values | Definition and Examples

P-values Explained By Data Scientist | Towards Data Science

If you’d like to dive deeper into experimentation, here are a few of our learning programs you might enjoy:

A/B Testing Course for Data Scientists and Product Managers

Learn how top product data scientists frame hypotheses, pick the right metrics, and turn A/B test results into product decisions. This course combines product thinking, experimentation design, and storytelling—skills that set apart analysts who influence roadmaps.

Advanced A/B Testing for Data Scientists

Master the experimentation frameworks used by leading tech teams. Learn to design powerful tests, analyze results with statistical rigor, and translate insights into product growth. A hands-on program for data scientists ready to influence strategy through experimentation.

Master Product Sense and A/B Testing, and learn to use statistical methods to drive product growth. I focus on building a problem-solving mindset and on applying data-driven strategies, including A/B Testing, ML, and Causal Inference.

Not sure which course aligns with your goals? Send me a message on LinkedIn with your background and aspirations, and I’ll help you find the best fit for your journey.